The source code of PhysFuzz is released at https://github.com/physfuzz/physfuzz

Deep reinforcement learning (DRL) is now the dominant control paradigm for humanoid locomotion, yet real-world deployment raises safety concerns that go well beyond visible falls. Systematically surfacing the configurations that could trigger such concerns before physical deployment is a prerequisite for operational safety. Existing testing methods, however, are inherited from image-domain adversarial ML and software-only DRL fuzzing, and they treat the policy observation as an unconstrained Euclidean vector. As a result, they produce large numbers of physically invalid configurations whose reported crashes cannot be reproduced on hardware.

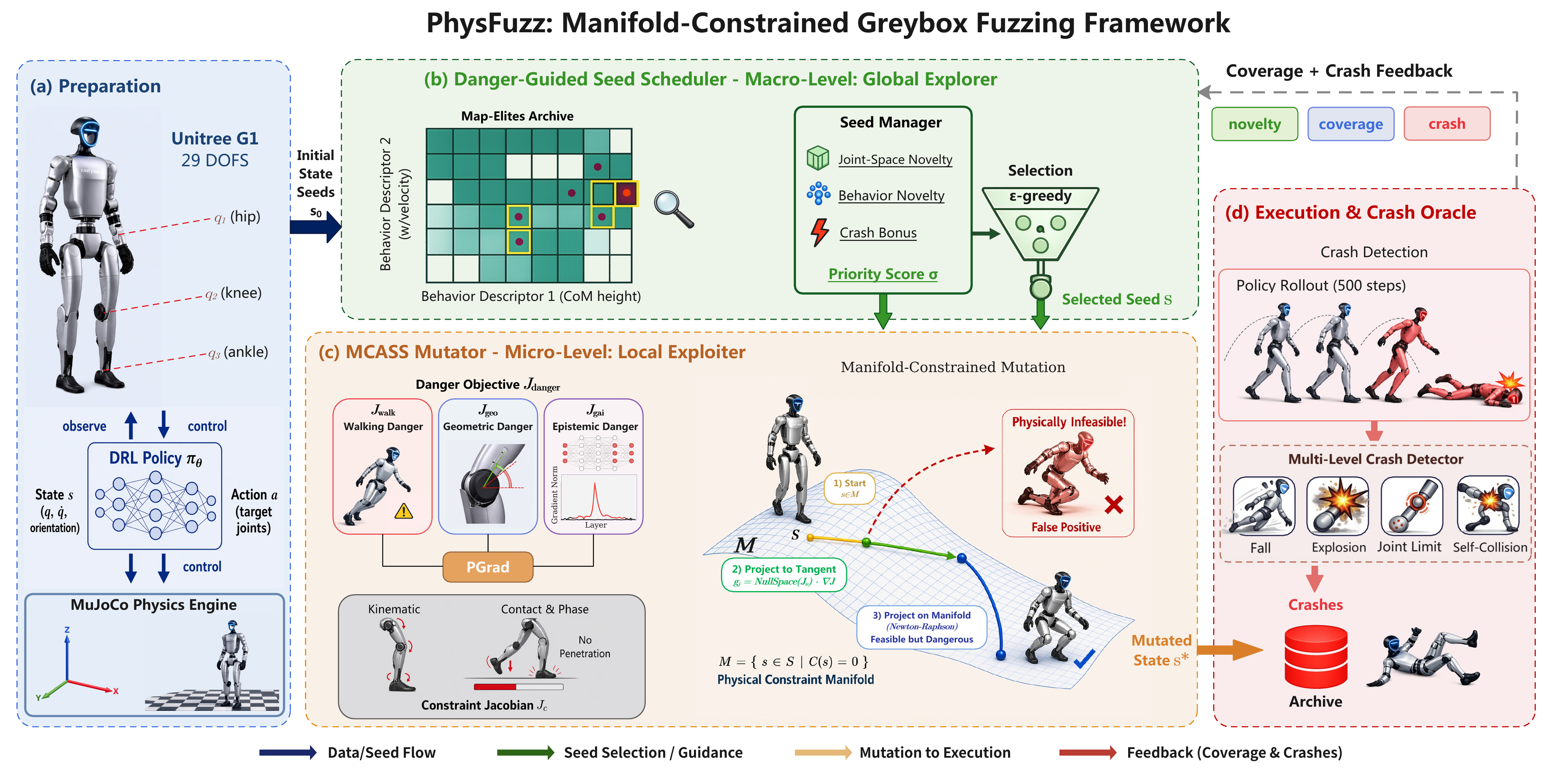

We present PhysFuzz, a physics-constraint-aware greybox fuzzer that audits DRL humanoid locomotion policies strictly within the manifold of physically realisable configurations. Its mutation primitive, Manifold-Constrained Adversarial State Search (MCASS), composes three kinematically distinct danger signals into a search direction, projects each step onto the manifold's tangent space via null-space projection, and then applies a Newton–Raphson pull-back, so that every intermediate state is physically realisable by construction.
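The project-then-pull-back pattern described above can be illustrated with a minimal NumPy sketch. It is not the PhysFuzz implementation: the unit-sphere constraint is a toy stand-in for the humanoid's physical constraints, the three "danger signals" are arbitrary placeholder vectors combined by a weighted sum, and all function names (`constraint`, `jacobian`, `project_to_tangent`, `pull_back`, `mcass_like_step`) are hypothetical.

```python
import numpy as np

def constraint(q):
    """Toy constraint g(q) = 0 (assumption): q lies on the unit sphere."""
    return np.array([q @ q - 1.0])

def jacobian(q):
    """Jacobian of the toy constraint, shape (1, n)."""
    return 2.0 * q[None, :]

def project_to_tangent(q, d):
    """Null-space projection: drop the part of d that leaves the manifold to first order."""
    J = jacobian(q)
    N = np.eye(q.size) - np.linalg.pinv(J) @ J  # projector onto the null space of J
    return N @ d

def pull_back(q, tol=1e-10, max_iter=20):
    """Newton-Raphson correction q <- q - J^+ g(q), repeated until |g(q)| < tol."""
    for _ in range(max_iter):
        g = constraint(q)
        if np.linalg.norm(g) < tol:
            break
        q = q - np.linalg.pinv(jacobian(q)) @ g
    return q

def mcass_like_step(q, signals, weights, step=0.1):
    """One mutation step: combine signals, project onto the tangent space, pull back."""
    d = sum(w * s for w, s in zip(weights, signals))  # weighted sum is an assumption
    d_t = project_to_tangent(q, d)
    return pull_back(q + step * d_t)

# Usage: start on the manifold and take one constrained step.
q0 = np.array([1.0, 0.0, 0.0])
signals = [np.array([1.0, 1.0, 0.0]),
           np.array([0.0, 1.0, 1.0]),
           np.array([1.0, 0.0, 1.0])]
q1 = mcass_like_step(q0, signals, weights=[0.5, 0.3, 0.2])
print(q1, constraint(q1))
```

The tangent projection keeps the step first-order consistent with the constraint, and the Newton iteration removes the remaining second-order drift, so the returned state satisfies the toy constraint to within the tolerance. In a real system the constraint function and its Jacobian would come from the robot model rather than a closed-form sphere.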

Evaluated on the Unitree G1 humanoid across three locomotion policies of distinct architectures and frameworks, PhysFuzz discovers 3,072 to 7,479 unique physically realisable crashes per campaign with seed validity of 76% to 91%, triggering all five control-integrity channels on every policy. It outperforms five state-of-the-art baselines, and a representative subset of fall postures is reproduced on the physical Unitree G1.